From Grainy to Groundbreaking: NASA's Giant Leap in Shooting the Moon

When Neil Armstrong stepped onto the Moon in 1969, calling it “one small step for a man, one giant leap for mankind,” we got to see the lunar surface through human eyes for the very first time. While the cameras used on the Apollo 11 mission were pioneering for their time, they could capture little more than grainy, low-resolution images. Nearly six decades later, camera technology has made its own giant leap forward, allowing us to photograph the lunar surface in vivid, immersive detail.

From Apollo to Artemis: The First Camera in Space

In those early days of lunar exploration, the images transmitted back to Earth were grainy, flickering, and often barely discernible. Equipped with Hasselblad 500EL film cameras stripped of their viewfinders, the astronauts pointed, clicked, and hoped for the best, without ever seeing a single frame of what they were capturing on film.

Fast forward to April 1, 2026. From the instant Artemis II blasted off the launch pad to its final splashdown in the Pacific Ocean nine days later, almost nothing went unseen: The liftoff. Stage separation. Four astronauts drifting weightlessly inside the Orion capsule. The quiet, intimate moments they shared. All of it unfolded in real time for the world to see.

Every second of the flight was captured and transmitted in vivid, high-definition clarity to a world that couldn’t stop watching. At one point, audiences on Earth saw something they had never watched as it happened: the far side of the Moon, which emerged in crystal-clear detail with its vast, ancient expanse of craters and shadows.

The Imaging Technology That Made It All Possible

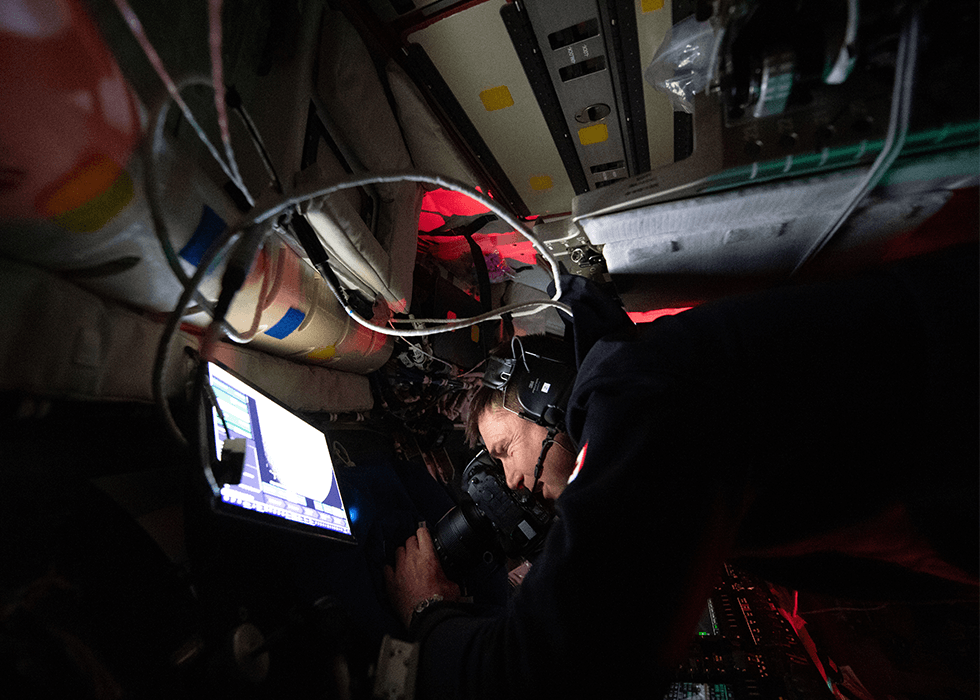

Space travel, with its violent launch, vibration, radiation, microgravity, vacuum conditions, and inability to perform complex onboard repairs, presents numerous challenges. That’s why multiple cameras, each with a defined role, were used to capture every dimension of the mission. In all, the mission carried 32 cameras, a count that includes any device with a lens capable of capturing photos or video, whether inside or on the exterior of the capsule.

Of these, 15 were mounted directly to the spacecraft, and 17 were handheld units operated by the crew. Fixed engineering cameras supported safety and verification protocols, monitoring events such as liftoff, stage separation, proximity operations, and reentry.

The imaging system was built on three principles: redundancy, functional specialization, and human storytelling. Each camera in the network served a specific role in capturing the mission as it unfolded:

- GoPro Cameras (External Action Cameras): Mounted on the spacecraft’s exterior, these space-qualified cameras, which are also used on the International Space Station, captured continuous wide-angle video of liftoff, stage separation, and in-flight operations. Their reliability in extreme conditions made them ideal for the harsh environment of space.

- Smartphones (The Crew’s Capture Devices): The astronauts themselves used the iPhone 17 for quick, spontaneous shots inside the cabin and to capture candid human interactions.

- Nikon D5 – The Proven Workhorse: The digital single-lens reflex (DSLR) D5 served as the mission’s stability anchor due to its durability and predictable performance. It excelled in low light, was built to withstand harsh conditions, and offered tactile controls that could be operated while wearing pressurized gloves. Its role was reliability above all else.

- Nikon Z9 – The Mirrorless Next-Generation Tool: Its mirrorless design and lack of a mechanical shutter removed a potential failure point while delivering ultra-high-resolution imaging, advanced autofocus, and 8K video capability. Its role was to test mirrorless technology in deep space, without a physical mirror/shutter assembly.

The Giant Leap in Galactic Storytelling

A balance between the proven and the possible defined NASA’s imaging choices on the Artemis II mission, guided by three core principles: 1) redundancy, so nothing is missed; 2) specialization, so every perspective is covered; and 3) human interest, with the four astronauts deciding what mattered most from a scientific and historical perspective.

While the Apollo missions of the past gave us images we had to interpret, Artemis II gave us experiences to be shared in real time. Each frame reflects how far we’ve come, not only in exploring space, but in documenting it – from the grainy uncertainty of early film to a new era of immersive storytelling that brings the entire world along on the journey.